Campus & Community

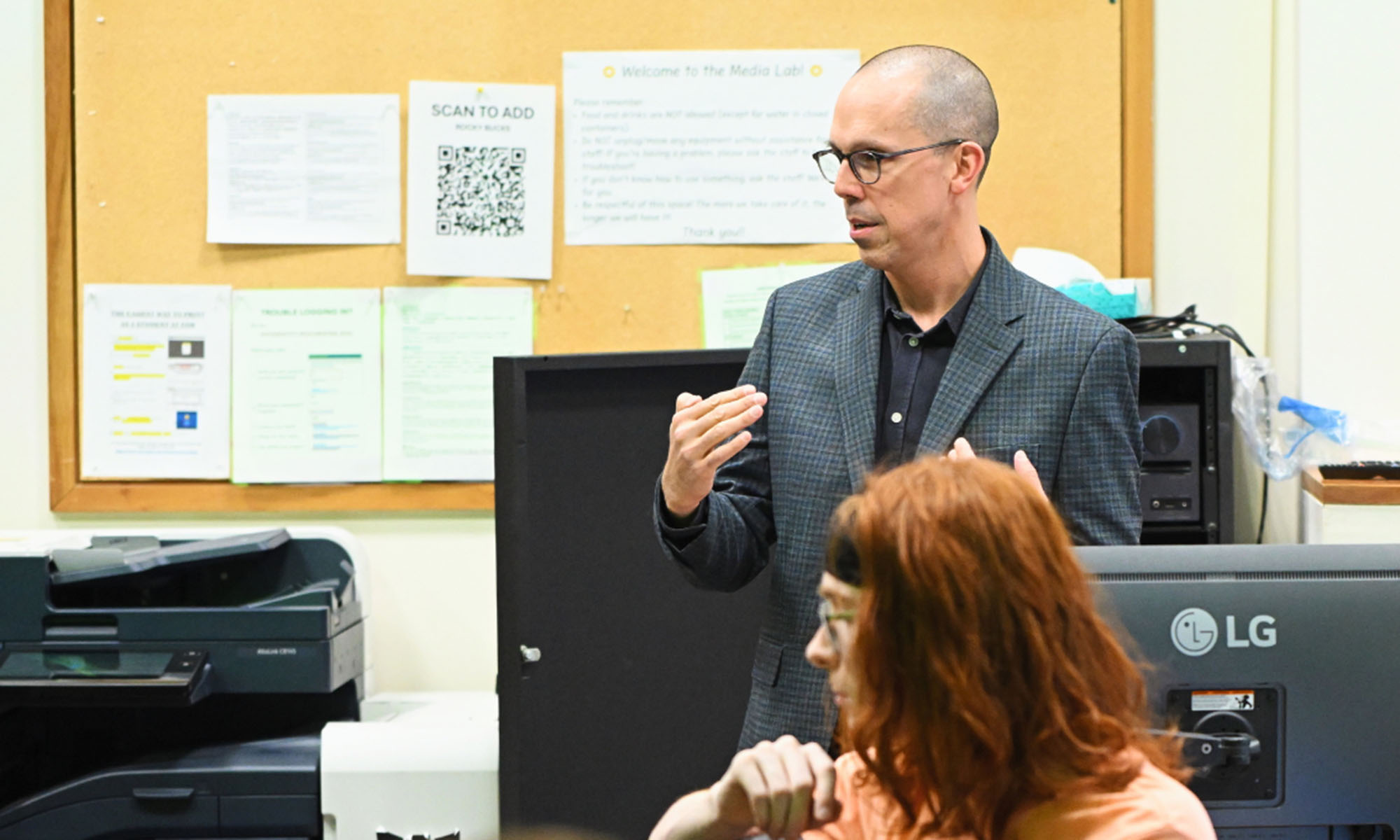

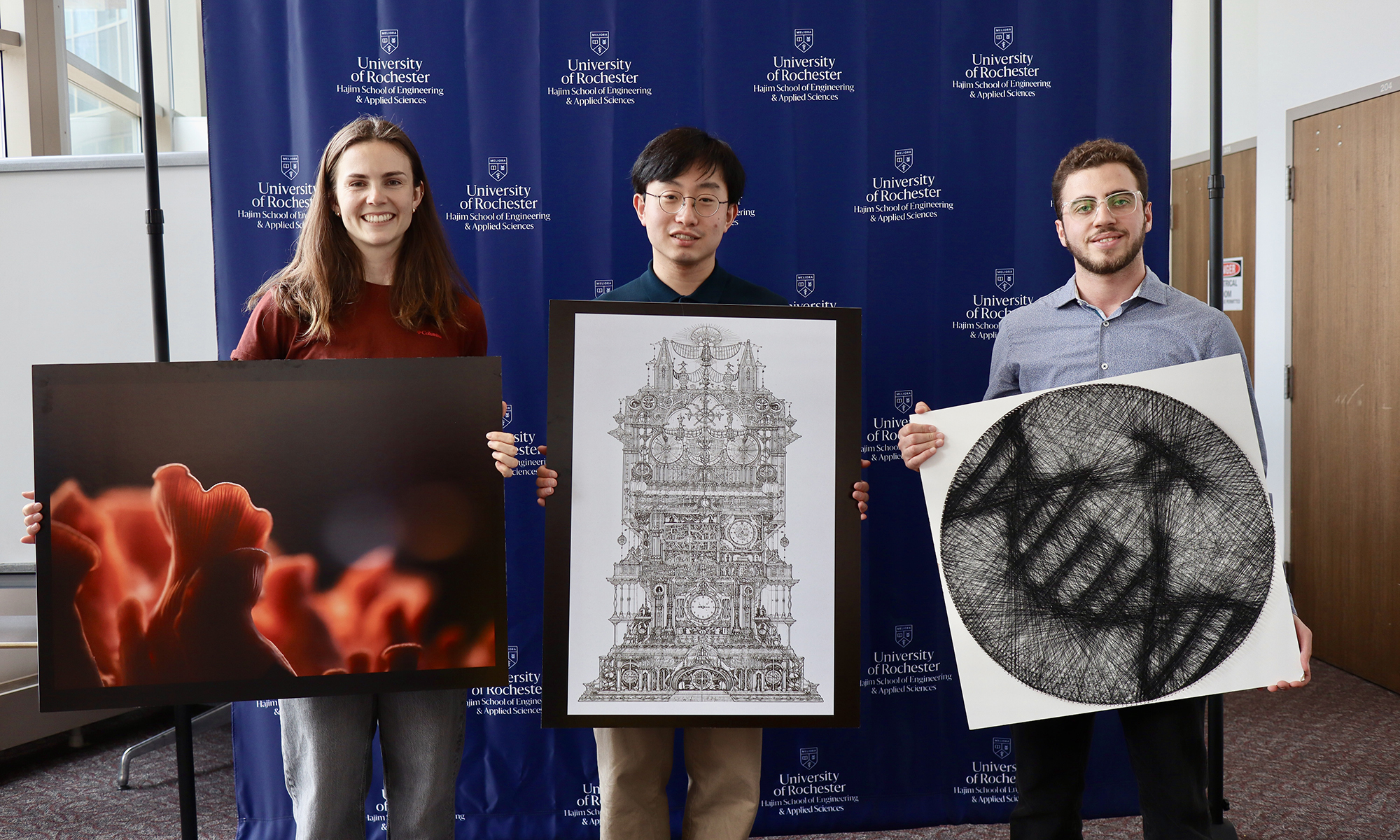

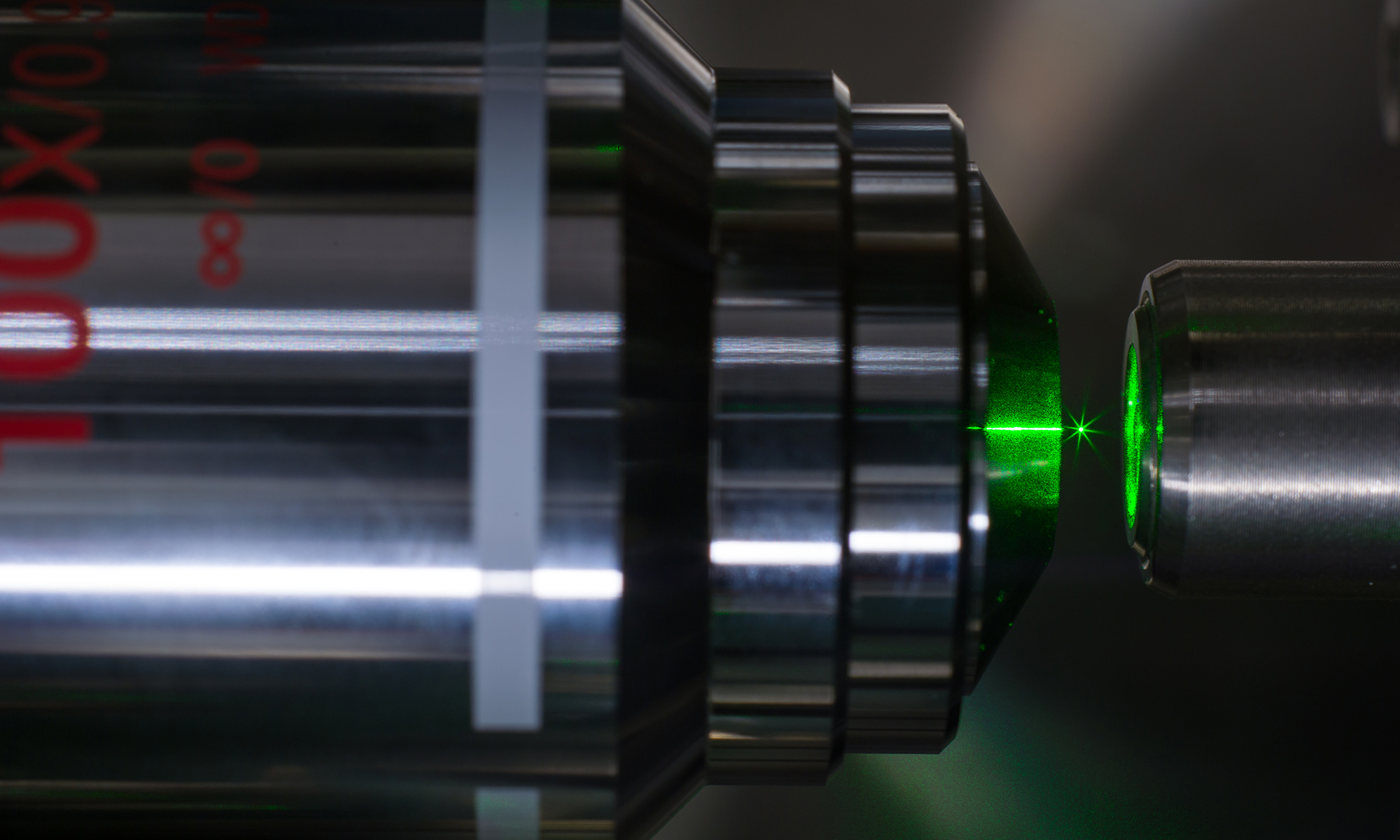

From mushrooms to molecules, science becomes art

The annual Ed and Barbara Hajim Art of Science Competition showcases how scientific discovery takes visual form across disciplines.

Honey Meconi’s scholarship on Saint Hildegard of Bingen informed the Vatican’s exhibit featuring FKA Twigs, Brian Eno, Patti Smith, and others.

At the University of Rochester, there’s no need to “pick a lane.” Here, art meets analytics, science meets soul, and curiosity leads to unexpected outcomes. It’s a home where thinkers and doers, scholars and starters, healers and leaders come together to create a world that’s ever better.