University administrators and faculty weigh in on the pros and cons of the newest online learning tool.

ChatGPT, the artificial intelligence chatbot, continues to have internet users abuzz, given its ability to answer prompts on a stunning variety of subjects, to create songs, recipes, and jokes, to draft emails, and more.

“It’s amazing to have this technology do in seconds what it takes many of us hours to do,” says Deborah Rossen-Knill, executive director of the University of Rochester’s Writing, Speaking, and Argument Program and a professor of writing studies. “There’s just an endless set of possibilities.”

Those endless possibilities, however, have faculty and administrators in higher education expressing anxiety as well as awe, because ChatGPT also can write essays and code, answer homework questions, and solve math problems.

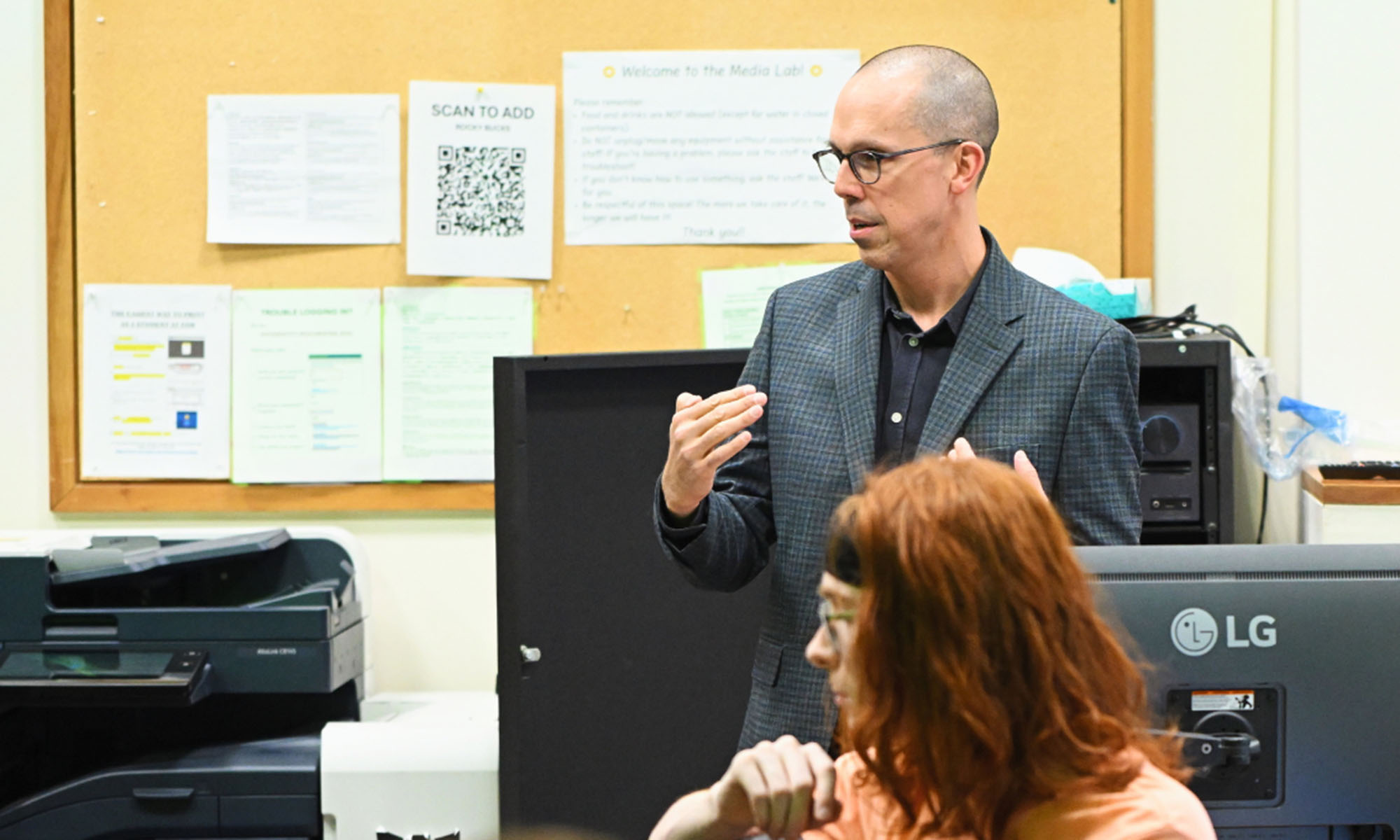

“We’re all trying to figure out how it fits into the existing landscape of higher education,” says Rachel Remmel, assistant dean and director of the University’s Teaching Center. “Everyone is talking about it.”

ChatGPT—the GPT stands for “generative pretrained transformer”—was launched by OpenAI in November 2022. It reached one million users in five days, according to OpenAI’s cofounder Sam Altman, and surpassed 100 million after two months, making it the fastest-growing consumer application in history.

And more is coming. Google is nearing the public release of a rival to ChatGPT called Bard.

“The one thing I’m sure of is ChatGPT and others like it are here to stay,” says Christopher Kanan, an associate professor in the Department of Computer Science. “And we, as educators, will just have to deal with that.”

Rochester faculty and administrators offered their thoughts on how they’re dealing with ChatGPT—and how it may affect teaching and learning down the road.

How will ChatGPT change the landscape of higher education?

In short, substantially.

Says Greer Murphy, director of academic honesty for Arts, Sciences & Engineering, “I think it has the potential to be extremely game-changing. It’s something that will continue to evolve, and those of us in higher ed will keep asking questions about principles and usages.”

Kanan, who is currently teaching an undergraduate course on how to build systems like ChatGPT, believes such systems are “going to be everywhere and be pervasive.”

“This is just Day One,” he says. “Improvements are being made as we speak. You think of earlier disruptive technology like the calculator and spellcheck. This is like that, only on ultra, ultra steroids. It can do so much more.”

Some faculty have already altered their teaching in the wake of ChatGPT’s release

Several faculty members have incorporated ChatGPT into assignments, primarily as a means of exposing the AI chatbot’s limitations.

Jonathan Herington, an assistant professor in the Department of Philosophy, used ChatGPT as part of an assignment this semester. He asked students to cowrite an essay with the chatbot on a question that would challenge the technology’s capabilities, such as one requiring citations from obscure texts or knowledge of readings published after 2020.

“I then had the students reflect upon the process of cowriting: what worked, what was harder, whether they would use this in the future,” Herington says. “I think it’s important for students to explore the capacities and limits of these models. They aren’t magic. They do some things very well, but lots of higher-level tasks—the ones we care about in upper-level philosophy—are beyond them.”

Liz Tinelli, an associate professor and the graduate writing project coordinator in the Writing, Speaking, and Argument Program, uses ChatGPT in a few courses. In Impacts of Engineering, students ask the AI chatbot to identify advantages and disadvantages of artificial intelligence. And in Communicating Your Professional Identity, students draft an abstract for a technical project, prompt the chatbot to modify it for different audiences, and then compare versions based on writing style and content.

“I’m asking students to explore and critically examine the implications of authorship and effective academic writing when using digital tools such as GPT,” Tinelli says. “In Writing for a Digital World, students explore the ethical complexities of authorship and attribution when generating texts using AI. And in Impacts of Engineering, we aren’t just asking for output. Students are critically engaging with output to learn how to ask better questions and identify specific audiences who care about the answers.”

To fulfill these learning objectives, Tinelli says students must be active participants in their own learning—not passive consumers of AI-generated information. “Ultimately, my goal is to help students critically evaluate and navigate the effective and responsible use of these tools,” she says.

Adam Purtee ’19 (PhD), an assistant professor of computer science, has returned to in-class quizzes for the first time since before the onset of the COVID-19 pandemic. “I’m changing all of my assignments to involve more high-level concepts and more integrative knowledge,” he says.

Purtee’s message is clear: “I want the student to know this information. The best way to do that is to get them alone in a room with a pencil and see what happens.”

Likewise, last fall Kanan introduced his first in-class, on-paper midterm since before the pandemic. “It just becomes harder to sort out who knows what and who’s getting help from things like ChatGPT,” he says.

Remmel says Rochester professors are likely to react to ChatGPT in different ways, depending on each instructor’s familiarity with the AI chatbot and on the learning objectives for a given course.

“My hope is that professors and students will weigh the pros and cons for each course,” she says.

ChatGPT and other AI chatbots are in the ‘yellow’ category of the academic honesty team’s assessment of online learning tools. That means students should proceed with caution.

Why yellow, on the green-yellow-red scale? The categories were created by administrators in the Writing, Speaking, and Argument Program and reviewed by the Academic Honesty office.

Says Murphy: “One reason is, there isn’t yet a consensus at Rochester or in higher education in general on how it may be used, and whether it’s mostly acceptable or unacceptable. It’s a tool we continue to react to. Putting it in the green category would signal it’s always OK to use it. Putting it in the red category would mean it’s never OK, which eliminates instructor discretion.”

AS&E recently created an instructors’ guide (PDF) for choosing when (or if) to use ChatGPT and other AI chatbots in the classroom, or to give students permission to use them. The takeaway for faculty and instructors? Be practical, be transparent, be consistent, be responsible, and be mentors.

Spelling out the rules—and the rationale behind them—can help prevent academic dishonesty

“Some classroom goals will fit well with ChatGPT, while others will not,” Remmel says. “We want students to always check with instructors, so they don’t end up in trouble. Not every professor will have the same rules, but every professor should spell out the do’s and don’ts in terms of using ChatGPT and other AI chatbots.”

Murphy encourages faculty members to go further, spelling out “not just what your policies are, but why and how they’re related to your course outcomes. If writing is not a part of that outcome, maybe think about smart, ethical use of GPT as something you would permit.”

Murphy stresses that it’s important for professors to understand that these are tools many students will use—with or without appropriate guidance. “To me, it’s just not realistic to try preventing their usage,” she says. “It’s far more important to teach students how to use them safely and effectively.”

The upshots of using ChatGPT and other generative AI technologies in the classroom

Rossen-Knill says ChatGPT and other generative AI technologies can help students learn things that aren’t always easy to teach. “There are many ways to write a book summary, and it’s sometimes hard to help students see they have a range of choices and perspectives,” she says. “But in a classroom, using ChatGPT, you could create three versions summarizing Edgar Allan Poe’s ‘The Tell-Tale Heart’ that look incredibly different, and it immediately helps students see that you have choices. What works about this one? And that one?”

Other school aids have been around for a long time. Photomath allows students to take pictures of math problems and receive the answers. Humanities papers have been sold for years. So, what makes ChatGPT different?

“It’s not that it’s more sophisticated,” Remmel says. “It’s more accessible and will be more commonplace. It’s a free (for now), extremely fast chatbot using technology that will likely be built into many commonly used software products in the future. ChatGPT poses different questions than conventional cheating industry products, as AI chatbots will be used in many settings.”

The technology behind AI chatbots like ChatGPT is changing rapidly

Generative AI technology is evolving at an incredibly fast pace. Purtee recently created a “tricky” homework assignment for first-year students. He tested it against ChatGPT, and the bot couldn’t answer correctly.

Two weeks later, a student turned in the assignment. “Here’s my code,” the student told Purtee, “but I also checked to see what ChatGPT came up with, and that one is much shorter and looks better. Which should I turn in?”

Purtee was taken aback after checking the results.

“It’s a little surreal that ChatGPT can solve a problem it couldn’t solve two weeks before. In a sense, it’s stronger than some of my intro students,” he says. “This is not a criticism of my students. They’ve had only two weeks to absorb this knowledge and learn a new skill, while ChatGPT has been trained on practically the entire internet.”

Situations like these can become teaching moments. “Somehow it offends my humanity to have a teaching assistant grade this work by a bot,” Purtee told the student. “Look at your work and look at what ChatGPT did. See what you like about this solution and revise your work before turning it in.”

And it’s not just classrooms being disrupted by such AI technologies.

“There are many companies and start-ups creating these systems now,” Kanan says. “Broadly, generative AI methods, such as large language models, are one of the hottest topics in AI because they are having real-world impact. Blogging companies and news outlets are now using them to produce content, and this is going to happen more and more. These systems are changing industries, and I foresee that in a few years they will be used to create many products. One of my students just won a hackathon using ideas inspired by ToolFormer [a language model developed by researchers at Meta], which enabled control of a drone with natural language.”

AI chatbots: friends or foes in higher education?

“I believe 100 percent that it can be a positive,” Rossen-Knill says. “But I also feel 100 percent that some will choose to use it in a negative way. Still, the heart of teaching is working with those who truly want to learn and collaborating to create new things. I don’t think that will change.”

Kanan says the answer depends on what consumers do with the technology.

“There are so many amazing applications that will open doors,” he says. “But it’s going to be disruptive to so many things. Pedagogy is the one thing professors are worried about. I was talking to a professor at another university. He fed ChatGPT a test, and it got an 80.”

Remmel says educators and students eventually will reach a common approach to where AI chatbots fit in. “But things are still fluid at this point,” she says. “And as technologies keep evolving, there will no doubt be another technology that will come along as a disruptor in the future. I still have a fundamental belief in universities as a place where human communities come together to learn and innovate, so I don’t think ChatGPT poses any risk to higher education’s fundamental value, even if it might change how we approach doing some of our teaching and learning.”
