PhD Training Program on Augmented and Virtual Reality

Program Overview

This brochure is an example of the National Science Foundation (NSF) traineeship program that we offered our PhD students through the end of the grant in Spring 2025. The NSF-funded program, Interdisciplinary Graduate Training in the Science, Technology, and Applications of Augmented and Virtual Reality (AR/VR), is a structured, multi-disciplinary PhD training program on AR/VR. This NSF Research Traineeship (NRT) program admits PhD students from multiple University of Rochester departments, including:

- Biomedical engineering

- Brain and cognitive sciences

- Computer science

- Electrical and computer engineering

- Neuroscience

- Optics

Students admitted to the program take three new innovative courses and benefit from a variety of professional development mechanisms, including industry internships and immersive professional development encounters with industry leaders.

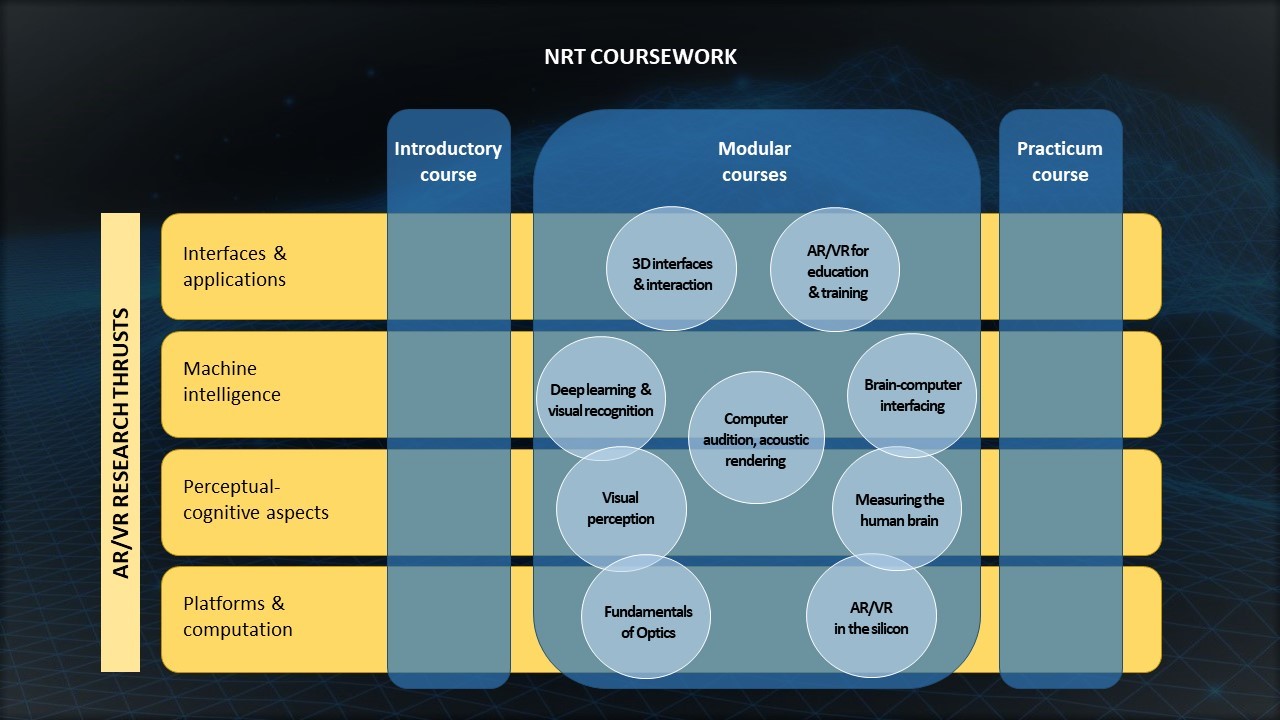

In addition, students work on innovative, interdisciplinary research projects that focus on:

- AR/VR platforms and computation

- Perceptual-cognitive aspects of AR/VR design

- Machine intelligence for AR/VR systems

- AR/VR interfaces and applications

Upon completion of the training program requirements, students receive a certificate.

The program also offers one-year, fully funded fellowships to a subset of the trainees enrolled in the program. These fellowships can be offered to US citizens and permanent residents only.

Both funded and non-funded trainees are expected to complete all program requirements to receive a certificate.

Graduate students who do not enroll can still benefit from courses and other activities offered as part of this program.

More information:

New training in AR/VR tech gives Rochester doctoral students an edge

A $1.5 million grant from the National Science Foundation will establish a structured, well-rounded training program for University scholars applying augmented and virtual reality in health, education, design, and other fields.

Program Requirements

Students enrolled in the program agree to complete all of the following program requirements:

- Introductory course

- Modular course

- Practicum course

- Internship

- Undergraduate capstone project supervision

- AR/VR-related research

While in the program, students will also participate in professional development encounters, the annual program showcase, and the student-run conference. Each trainee will be assigned a faculty mentor, who will guide the trainee through the program.

Program Courses

The training program contains three new, innovative courses, as well as a variety of structured professional development activities. The courses address the diverse backgrounds of incoming students, expose them to AR/VR challenges, and provide the competency to work on AR/VR projects within multi-disciplinary teams.

The introductory course, *ECE 410: Introduction to Augmented and Virtual Reality, taught by multiple faculty in coordination, provides a broad introduction to AR/VR systems. Its main purpose is to build a common base of understanding and knowledge for all students in the program as well as to provide a foundation on which they can build their research.

The second course consists of three one-month-long modules in a semester. Each module engages students in a particular aspect of AR/VR or gives them hands-on experience with AR/VR. A set of nine distinct modules will be offered, with some modules rotating in and out each year.

In the third course, small teams of trainees from multiple departments will work together on a semester-long AR/VR project with the guidance of a faculty member involved in the program. The end products of this practicum course will be tangible artifacts that represent what the students have learned, discovered, or invented. Types of artifacts include:

- Research papers

- Patent applications

- Open-source software

- Online tutorials and videos for undergraduates, K-12 students, or the general public

Students should speak to their advisor to see if these courses will count towards the PhD degree requirements.

* ECE 410: Introduction to Augmented and Virtual Reality is also cross-listed as OPT 410, BME 410, BCSC 570, NSCI 415, CSC 413, and CVSC 534.

| When | Activity |
|---|---|
| Fall year one | Introductory course |
| Spring year one | Modular course |
| Summer year one | Unity programming course and practice |
| Fall year two | Practicum course |
| Summer year two | Internship |
| Years two through five | Annual program showcase and student-run conference |
| Years three and four | Undergraduate capstone project supervision |
| All years | AR/VR-related research |
| All years | Professional development encounters |

Applying to the Program

Previously, any University of Rochester PhD student in electrical and computer engineering, optics, biomedical engineering, brain and cognitive sciences, computer science, or neuroscience who was interested in AR/VR research was welcome to apply for admission to the program as a trainee.

To apply, complete the application form. (At this point, we are no longer taking applications for this program.)

Applying for a Funded Fellowship

NOTE: No new applicants were accepted for the Fall 2024 school year. The information below is an example of what we offered previously.

The program also offers a small number of one-year, fully funded fellowships to a subset of the students enrolled in the program. Students who are not yet in the program but are planning to enroll can apply to the program and the funded fellowship simultaneously.

These funded fellowships can be offered to US citizens and permanent residents only. Typically, they will be offered to students who already have an assigned PhD advisor.

NOTE: We are not taking any applications at this time.

To apply for a one-year funded fellowship for the next academic year, complete the application for the graduate AR/VR program (unless you are already a trainee enrolled in the program) and send the following documents to ARVR-PhDtraining@rochester.edu:

- CV

- Statement of purpose

- Transcripts

- One recommendation letter

People/Contacts

For any questions about this PhD training program, please contact ARVR-PhDtraining@rochester.edu.

Faculty

| Name | Role | Affiliation | Relevant Area of Expertise |
|---|---|---|---|
| Mujdat Cetin | PI | Department of Electrical and Computer Engineering | Image analysis, brain-computer interfaces |
| Jannick Rolland | Co-PI | Institute of Optics | Optical system design |
| Michele Rucci | Co-PI | Department of Brain and Cognitive Sciences | Visual perception |
| Zhen Bai | Co-PI | Department of Computer Science | Intelligent user interfaces |
| Zhiyao Duan | Faculty participation | Department of Electrical and Computer Engineering | Computer audition, audio signal processing |
| Daniel Nikolov | Research Scientist | Institute of Optics ODALab | Optical system design |
| Yukang Yan | Faculty participation | Department of Computer Science | Human-computer interaction, human behavior modeling, virtual/augmented reality |
| Andrew White | Faculty participation | Department of Chemical and Sustainability Engineering | Application of AR/VR to higher education |
| Chenliang Xu | Faculty participation | Department of Computer Science | Computer vision, machine learning |
| Yuhao Zhu | Faculty participation | Department of Computer Science | Computer architecture, algorithm design |
| Edmund Lalor | Faculty participation | Department of Biomedical Engineering, Neuroscience | Analysis of sensory electrophysiology |
| Mark Bocko | Faculty participation | Department of Electrical and Computer Engineering, Department of Physics and Astronomy | Audio and acoustic signal processing, computer audition |
| David Williams | Faculty participation | Institute of Optics, Ophthalmology, Department of Biomedical Engineering, Department of Brain and Cognitive Sciences | The human visual system, physiological optics |

Program Evaluator

Chelsea BaileyShea, Compass Evaluation + Consulting LLC.

Program Coordinator

Kathleen DeFazio

Department of Electrical and Computer Engineering

kathleen.defazio@rochester.edu

(585) 275-1736

Trainees

See the NRT PhD Trainees page for a list of trainees.

External Advisory Committee

- Martin Banks, chair of the UC Berkeley Vision Science Program

- Chris Chafe, director of the Stanford Center for Computer Research in Music and Acoustics

- Kai-Han Chang, senior researcher at General Motors

- Barry Silverstein, director of optics and display research at Facebook Reality Labs/Meta

- Paul Travers, president and CEO of Vuzix

Industry Partners

- Facebook/Meta

- Microsoft

- Nvidia

- Vuzix